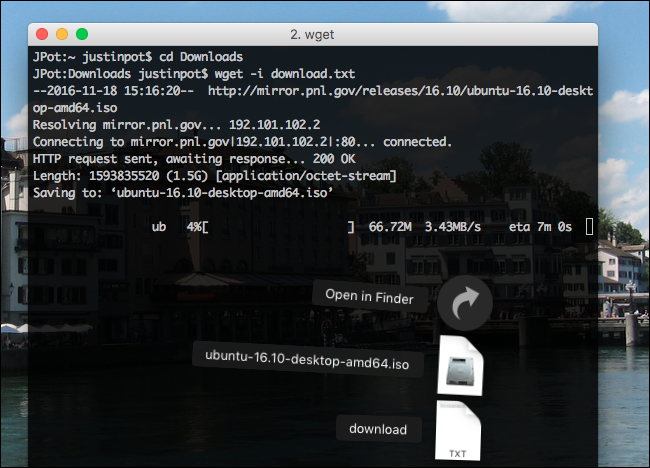

I need to download a file using wget, but I don't know exactly what the file name will be. According to the documentation, wget lets you turn globbing off and on when dealing with an FTP site, but I have an HTTP URL.

How can I use a wildcard with wget? I'm using GNU wget.

Things I've tried:

`/usr/local/bin/wget -r '…' -P /tmp`

Update: using -A causes all files ending in .tar.gz on the server to be downloaded.

`/usr/local/bin/wget -r '…' -P /tmp -A 'bar*.tar.gz'`

Update: from the answers, this is the syntax that eventually worked.

`/usr/local/bin/wget -r -l1 -np '…' -P /tmp -A 'bar*.tar.gz'`

I think these switches will do what you want with wget:

`-A acclist`, `--accept acclist`

`-R rejlist`, `--reject rejlist`

Specify comma-separated lists of file name suffixes or patterns to accept or reject.

Note that if any of the wildcard characters, *, ?, [ or ], appear in an element of acclist or rejlist, it will be treated as a pattern, rather than a suffix.

`--accept-regex urlregex`, `--reject-regex urlregex`

Specify a regular expression to accept or reject the complete URL.

Example: `$ wget -r --no-parent -A 'bar*.tar.gz' http://url/dir/`
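The suffix-versus-pattern rule can be tried out in plain shell. This is only a rough approximation using the shell's own glob matching, not wget's code; `accept_matches` and the file names are made up for illustration.

```shell
# Hypothetical helper approximating the acclist rule; not part of wget.
# Usage: accept_matches FILENAME ENTRY
accept_matches() {
    name=$1
    entry=$2
    case $entry in
        *\**|*\?*|*\[*|*\]*)
            # The entry contains a wildcard character: match it as a glob.
            case $name in
                $entry) return 0 ;;
            esac
            ;;
        *)
            # No wildcard character: treat the entry as a plain suffix.
            case $name in
                *"$entry") return 0 ;;
            esac
            ;;
    esac
    return 1
}

# Illustrative file names (assumptions, not taken from a real server).
accept_matches 'bar.1234.tar.gz' 'bar*.tar.gz' && echo 'pattern entry accepts bar.1234.tar.gz'
accept_matches 'foo.tar.gz' '.tar.gz' && echo 'suffix entry accepts foo.tar.gz'
accept_matches 'foo.tar.gz' 'bar*.tar.gz' || echo 'pattern entry rejects foo.tar.gz'
```

An entry such as `.tar.gz` has no wildcard, so it behaves as a suffix and accepts every archive, while `bar*.tar.gz` narrows the match to names starting with `bar`.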

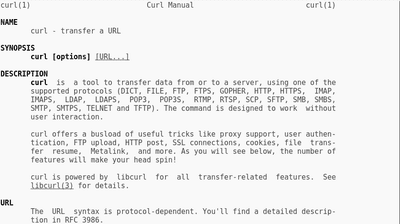

There's a good reason that this can't work directly with HTTP, and that's that a URL is not a file path, although the use of / as a delimiter can make it look like one, and they do sometimes correspond. [1] Conventionally (or, historically), web servers often do mirror directory hierarchies (for some, e.g., Apache, this is sort of integral) and even provide directory indexes much like a filesystem. However, nothing about the HTTP protocol requires this.

This is significant, because if you want to apply a glob on, say, everything which is a subpath of …, then unless the server provides some mechanism to give you such a list (e.g. the aforementioned index), there's nothing to apply the glob to. There is no filesystem there to search. For example, just because you know there are pages … and … does not mean you can get a list of files and subdirectories via …. It would be completely within protocol for the server to return 404 for that. Or it could return a list of files. Or it could send you a nice jpg picture. So there is no standard here that wget can exploit.

AFAICT, wget works to mirror a path hierarchy by actively examining links in each page. In other words, if you recursively mirror … it downloads index.html and then extracts links that are a subpath of that. [2] The -A switch is simply a filter that is applied in this process.

In short, if you know these files are indexed somewhere, you can start with that using -A. If not, then you are out of luck.

[1] Of course an FTP URL is a URL too. However, while I don't know much about the FTP protocol, I'd guess based on its nature that it may be of a form which allows for transparent globbing.

[2] This means that there could be a valid URL … that will not be included because it is not in any way linked to anything in the set of things linked to …. Unlike filesystems, web servers are not obliged to make the layout of their content transparent, nor do they need to do it in an intuitively obvious way.
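The link-following idea described above can be sketched in a few lines of shell. This only illustrates the filtering step on a list of links that has already been extracted; `filter_links` is a hypothetical helper, the file names are invented, and none of this is how wget is implemented internally.

```shell
# Hypothetical helper: read links on stdin, keep those that sit beneath
# the starting URL and whose last path component matches the accept glob.
# Usage: filter_links BASE_URL GLOB
filter_links() {
    base=$1
    pattern=$2
    while IFS= read -r link; do
        case $link in
            "$base"*)
                # Strip everything up to the last slash to get the file name.
                file=${link##*/}
                case $file in
                    $pattern) printf '%s\n' "$link" ;;
                esac
                ;;
        esac
    done
}

# Links as they might be found on an index page (invented for illustration).
printf '%s\n' \
    'http://url/dir/bar.1234.tar.gz' \
    'http://url/dir/notes.txt' \
    'http://url/other/bar.5678.tar.gz' |
    filter_links 'http://url/dir/' 'bar*.tar.gz'
```

A file that no page links to never appears on stdin here, which is the same reason a recursive wget cannot find it.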

The above '-A pattern' solution may not work with some web pages. This is my workaround, with a double wget:

1. wget the page
2. grep for the pattern
3. wget the file(s)

Example: suppose it's a news podcast page, and I want 5 mp3 files from the top of the page:

`wget -nv -O- '…' | grep -o '[^"[:space:]]*://[^"[:space:]]*pattern[^"[:space:]]*\.mp3' | head -n5 | while read x; do sleep $(($RANDOM % 5 + 5)); wget -nv "$x"; done`

The sleep of 5 to 9 seconds before each download is there to appear gentle and polite. The grep is looking for double-quoted no-space links that contain :// and my file name pattern.
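The grep step can be checked offline before pointing it at a real site. In this sketch `sample_page` stands in for the HTML that the first wget would print, and `extract_links` wraps the same regular expression; both names, and every URL in the sample, are made up for illustration.

```shell
# Stand-in for the page that `wget -nv -O- URL` would print (invented HTML).
sample_page() {
    cat <<'EOF'
<a href="https://example.com/audio/news-01.mp3">Episode 1</a>
<a href="https://example.com/audio/news-02.mp3">Episode 2</a>
<a href="https://example.com/audio/music-01.mp3">Music</a>
<a href="/relative/news-03.mp3">Relative link, no scheme</a>
EOF
}

# Hypothetical helper.
# $1 = file name pattern to look for, $2 = how many links to keep.
extract_links() {
    grep -o "[^\"[:space:]]*://[^\"[:space:]]*$1[^\"[:space:]]*\.mp3" | head -n "$2"
}

# Print up to 5 absolute .mp3 links whose URL contains "news".
sample_page | extract_links news 5
```

Relative links have no `://` and are skipped, so a page that only uses relative links would need the expression adjusted.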
